SLS Software Problems Continue at MSFC

Keith’s note: Sources report that Andy Gamble has been allowed to retire and George Mitchell has been reassigned from NASA MSFC QD34 to Engineering. The SLS software team at MSFC is having great difficulty in hiring people to replace those who have quit. As a result of the SLS software safety issues raised to date, there is a lot of internal concern that MSFC will literally have to go back to square one on the software in order to verify it for use on human missions.

– This Is How NASA Covers Up SLS Software Safety Issues (Update), earlier post

– MSFC To Safety Contractor: Just Ignore Those SLS Software Issues, earlier post

– SLS Flight Software Safety Issues Continue at MSFC, earlier post

– SLS Flight Software Safety Issues at MSFC (Update), earlier post

– Previous SLS postings

Round and round we go, when SLS/Orion stops vampire-sucking other needed programs into oblivion no one knows.

Someone needs to call Dr. Van Helsing in on this steaming pile.

If NASA or their contractors are having software QA problems, then maybe they need to look at http://www.testimation.com.

That software is for test estimation. You are suggesting using a hand saw to drive a nail then?

I am suggesting the following:

1. Estimate the number of User Stories contained within the Requirements (typically done by Business Analysts or Developers, not QA people)

2. From the above, use the technology to estimate the number of tests required to validate the User Stories

3. From the above, use the technology to associate the estimate with a level of expected defect-free confidence probability (%)

4. At vendor delivery, input the number of actual tests executed to determine the true level of defect-free confidence & compare to the expected number in point “3” above (also using the technology)

5. If delivered = expected; no hidden defects

I can’t see how that is using a hand-saw to drive a nail.

The defect-free confidence capability is on their landing page, with pictures. If you know their software is for test estimation, then you have visited the site & you have answered your own question.

As NASA does things, there are no “user stories” involved in developing requirements. Your approach seems to imply customers, and customers with experience with a previous version of the product. That’s completely different from NASA’s approach of high level (level 0) goals, which flow down into project-wide requirements (level 1), which flow down to system-level requirements, etc.

I can assure you 110%, at some point, the Requirements are translated to “User Stories”, “Use Cases”, “Test Scenarios” or “Functional Processes”. If NASA is not doing this, then their Software sub-contractors are; this is the ONLY way to turn Requirements into Code into Tests, the only way. This is standard Software-Development-Life-Cycle methodology, globally.

The process you describe above is the normal process when sub-contractors are used. It happens everywhere, absolutely everywhere in organizations much larger & smaller than NASA. NASA, based upon your description, is not innovative in this regard, nothing cutting edge is described above; totally standard stuff.

Typically:

“User Stories” = Agile Methodology

“Use Cases” = Waterfall Methodology

“Test Scenarios + Functional Processes” = Both

A “User Story/ Use Case” defines how a User interacts with the code, to perform some function (e.g. monitor fuel temperature, altitude etc.).

It is very common practice from Banks to Governments to simply list Requirements & have the sub-contracted SDLC Team (often off-shore in India, Vietnam, Thailand, The Philippines etc.) translate them into coding instructions & specifications. This then obviously leads on to the Test Team using them too. All this is done with “User Stories, Use Cases, Test Scenarios & Functional Processes”.

Validation in the Software space requires a test basis. Typically the test basis will take several forms, including Requirements. Normally, we see Requirements mapping to User Stories mapping to Test Scenarios mapping to Test Cases mapping to Test Conditions …. ONLY THEN (or some approximation thereof), is it possible for a Vendor to claim that they have delivered the solution.
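The mapping chain described above can be sketched as a simple coverage check. This is only an illustration under stated assumptions: the requirement, story and test IDs are hypothetical, the chain is shortened to Requirements → User Stories → Test Cases, and nothing here reflects how NASA or any vendor actually stores its traceability data.

```python
from typing import Dict, List


def untested_requirements(
    req_to_stories: Dict[str, List[str]],
    story_to_tests: Dict[str, List[str]],
) -> List[str]:
    """Return requirements that cannot be traced to at least one test case."""
    missing = []
    for req, stories in req_to_stories.items():
        # A requirement counts as covered if any of its stories has a test.
        if not any(story_to_tests.get(story) for story in stories):
            missing.append(req)
    return sorted(missing)


# Hypothetical IDs, for illustration only.
req_to_stories = {
    "REQ-1": ["US-1", "US-2"],
    "REQ-2": ["US-3"],
    "REQ-3": [],  # never decomposed into a story
}
story_to_tests = {
    "US-1": ["TC-1", "TC-2"],
    "US-2": [],
    "US-3": [],  # story exists but nobody wrote a test
}

print(untested_requirements(req_to_stories, story_to_tests))
```

A requirement that shows up in this list has no test basis at all, so a vendor could not claim to have validated it.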

The http://www.testimation.com technology goes one step further to include the Defect-Free Confidence Probability into the solution delivered, based upon the amount of testing predicted compared to the amount delivered.

By the way: the reason I keep stating the URL is because I am not aware of the name of their technology. Where I am, we just call it “The Testimator”, but that’s just one branding in their suite of Tools.

They can’t even give the SLS a name! I’ve suggested the seventh planet. But you have to be careful how you pronounce it and in what context!

Wow, this is just a burning dumpster, isn’t it?

And yet they have the gall to human certify this monstrosity for the second launch while making SpaceX jump through ridiculous hoops that SLS would never be able to meet.

Not just Dragon but CST-100 also.

BTW, Boeing needs to replace whoever names their stuff with someone younger than 75. “CST-100” isn’t a name…it’s a model number. On their naming convention, I’m WAH-962. 😉

Or THX 1138? I know this is all about image and marketing, but those can be important things. Is a name better than a model number? I was on N156SY yesterday, and the fact that I had to look that up (and how few people know or care about the tail number on the airplane they fly on) says something about civil aviation. Even for a name for a class of vehicles, an ERJ-175 isn’t so different from CST-100.

But names, not serial numbers, do have some appeal. The US navy does do both (e.g. CV-6 was the _Enterprise_.) I guess it’s a matter of what sort of image you think spacecraft names should project. Is it just one more item off a production line or something unique and special?

Well they’ve gotten away with it with 737, 747, etc. I guess this adopts it into their paradigm and shows that they are more serious about it than I’ve been giving them credit for.

Seems to me that luxury Carmakers do the same thing (Including Saint Elon’s Model 3).

Model 3 was originally named model E, until Ford beat Tesla to trademark Model E for a future Ford hybrid car model.

Yeah, so Ford killed SEXY 🙁

That’s why the full name is Boeing CST-100 Starliner.

OK. I’d forgotten that last part.

In fairness, you are not the only one I’ve spoken to about this issue, so some additional marketing on Boeing’s part would not be frowned upon.

When everyone knows a program is just pork, who wants to work on it? People who like or need pork. Idealism and a sense of higher purpose are left at the door. Meanwhile, SpaceX is hiring.

It is time to pull the plug on SLS.

We use the technology that Bret mentions, quite a bit in the banking sector. It predicts, then measures the Defect-Free Confidence Probability (%) of the solution deployed. It’ll tell you the probability of Defects residing undetected in your systems. I’m surprised that NASA doesn’t use it, I thought they invented it …. ?????

But remember you are dealing with a rocket based on 1970’s technology, SRBs from the Shuttle, RS-25s surplus from the Shuttle, a core stage based on a redesigned Shuttle External Tank. So I expect most of the software is also a legacy from the Shuttle era. So why would they want to introduce a 21st Century software to their 1970’s era rocket?

Because they can? Or because the 1970s software was not designed according to NASA’s current “best practices” for software development used on class A missions? Or maybe they can’t find any processors which can run software written in Z80 assembly language?

Seriously, do we even know what sort of software we’re talking about? Are the issues in the engine control firmware, or the guidance and navigation system, or what? Legacy software for the engine controllers makes sense since they are using legacy engines. But guidance and navigation would be completely different since the aerodynamics and launch profile of the vehicle aren’t legacy.

I should clear something up. The http://www.testimation.com software isn’t a control system, it’s Risk Management technology. A User inputs certain information & their technology calculates the probability that defects remain undetected in the software under test (the control system software, for example). The more testing is done, the lower the likelihood that defects have been missed. For example, if 2,000 software tests are required for 99% confidence of no defects, & only 1,200 tests have actually been done, then their technology informs the User of the percentage probability of undetected defects. Therefore, this technology applies to any situation where software quality is required to be known (which is everywhere these days), so 1970’s or not, it does not matter.
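To make the 2,000 versus 1,200 example concrete, here is a rough sketch. The vendor’s actual formula is not described anywhere in this thread, so the model below is purely an assumption for illustration: it supposes every test is equally likely to expose a remaining defect, which makes the residual risk shrink geometrically with the number of tests run. The function name and parameters are hypothetical.

```python
def confidence_after(executed: int, required: int, target: float) -> float:
    """Estimated defect-free confidence after `executed` tests.

    ASSUMED model (not the vendor's): if `required` tests give `target`
    confidence, the residual risk (1 - target) scales as a power of the
    fraction of tests actually executed.
    """
    if required <= 0 or executed < 0:
        raise ValueError("test counts must be positive")
    if not 0.0 < target < 1.0:
        raise ValueError("target confidence must be strictly between 0 and 1")
    residual_at_target = 1.0 - target
    return 1.0 - residual_at_target ** (executed / required)


# The figures from the comment above: 2,000 tests planned for 99%, 1,200 run.
delivered = confidence_after(executed=1200, required=2000, target=0.99)
print(f"confidence delivered: {delivered:.1%}")
print(f"chance of undetected defects: {1.0 - delivered:.1%}")
```

Under that assumed model, running 60% of the planned tests leaves roughly a 6% chance of undetected defects rather than the planned 1%. A different model would give different numbers, which is exactly why the assumptions behind any such figure need to be stated.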

Consider this: unless the military is using Testimation technology, they are deploying software solutions of unknown defect-free confidence into defense strategies.

Consider this: if aircraft manufacturers are not using Testimation technology, we are all flying with avionics of unknown defect-free confidence ….. A chilling thought.

I was being sarcastic 🙂 I would hope they are using new software. However it does illustrate the real difference between the reusable FH and the SLS…

I know. But this is a real, serious issue. Serious enough to turn a billion Euro spacecraft into a fireworks display in that cool, purple color of burning hydrazine and NTO. Reusing old, proven software can be an extremely good idea. But it can also be a disastrous mistake. Figuring out which way to go isn’t a well-understood process and it ends up mattering as much as more discussed things (e.g. hydrogen versus kerosene versus methane as a fuel.)

Just to be clear, NASA does have all sorts of processes in place for this sort of risk assessment and reduction. I suspect that’s actually the problem. Based on past reports, we have no reason to think there is a problem with the SLS flight software. What is very clear is that NASA is not following its own process to make sure there are no problems. I’m not shocked, since the NASA process is very cumbersome. There is a very strong temptation to cut corners and skip steps. That can easily be worse than having a less rigorous process that people actually follow.

But in terms of the commercial software (which someone’s making a lame effort to advertise for free), there are lots of things like that floating around. Some might be better than NASA’s official process (at least I hope so.) But they all suffer from the same flaw as all the other alternative processes which make commercial work different from SLS development. They are not the official, approved, in-house NASA way of doing things.

In truth, I don’t know if they have a software problem or not. It’s hard to tell from second hand information being reported by a non-expert in the field. So, as far as I am concerned, any views I have on the subject are probably wrong …. But you make a very believable point regarding “skipping steps”; this happens everywhere in everything, there’s no reason to think that NASA is going to be different. You’d be shocked to know some of the things I’ve learned (first hand) that federal departments do to get things done on time. So, I take your point.

I’m not sure that it is advertising. I agree that repeating the URL looks like it, but it could just be “his” writing style. It seems to me that “he” is a QA person, so being painfully detailed is a common personality trait. I work in the Software QA Industry & “that person” is very clearly experienced in the field, I can see from “his” language. So unless “that person” is working for “them”, it’s not advertising.

Since this post started, I’ve done some digging & I found that “they” own the technology exclusively, so unless NASA bought it from them directly, it can’t be purchased. Also I found that they have “Patent Pending” on their technology, this means that they’re the first to develop it. Big players like Infosys, Capgemini, Accenture, KPMG, Deloitte & PwC don’t have it because the banks use this technology to measure the vendors (above).

I’ve got 30+ years in QA & we use the technology in the banking sector, but I don’t know how long my current employer has been using it. It works well & it costs the bank a lot of money in licensing fees, so I suspect that it’s a very niche product; maybe it only exists in the banking sector ? …. Unfortunately, losing money seems to be more socially important than losing lives. So maybe the banks are simply more rigorous in their software testing processes than NASA; it would not surprise me.

If NASA cared about SLS they would have made safety a priority.

At the rate they’re going, this software problem might start ruling the timeline and thus threaten the whole program…and maybe DSG with it (because Congress probably won’t fund DSG except as a mission to save SLS).

Worse is if they now try to rush it (since EM1 is uncrewed) to keep it from ruling the timeline, such that EM1 fails. It’ll take until EM2 to retest EM1. Three more wasteful years of fixed-cost accrual.

Don’t forget to add the year or so of hearings and investigations into why EM1 failed.

That is the three years I was referring to. I seem to recall that was how long the shuttle was down after Challenger.

I think it was two years and seven months (and two years and five months after Columbia.) But EM-2 is currently scheduled for three years after EM-1. Any delay due to an EM-1 failure would be in addition to that. So we’d be talking about 2025 or so before they got to where EM-1 should have gotten them in 2019.

That’s what I had been telling folks too. Then I remembered that the big gap between EM1 and EM2 (used to be 5 years) was to wait for the development of the ESA interstage, or am I remembering that wrong?

I honestly don’t know, because the details of EM-1 and EM-2 have changed so many times. At the moment, they will both fly the European Orion Service Module, so that isn’t responsible for the years between the two flights. EM-2 will use the Exploration Upper Stage (4 RL-10s…) so that might explain some of the delay. Now they are talking about EM-2 also carrying the first elements of the Deep Space Gateway, so that could be on the critical path and driving the schedule. And now, maybe, Europa Clipper will not launch on an SLS in 2022, which would make an SLS available for EM-2 sooner than previously planned. Or, perhaps, that means flying people on the second rather than the third flight of SLS, so maybe they would want more time for testing. The way plans for SLS keep changing, I just can’t tell what is driving the schedule. It’s hard enough to just keep up with what the schedule actually is…

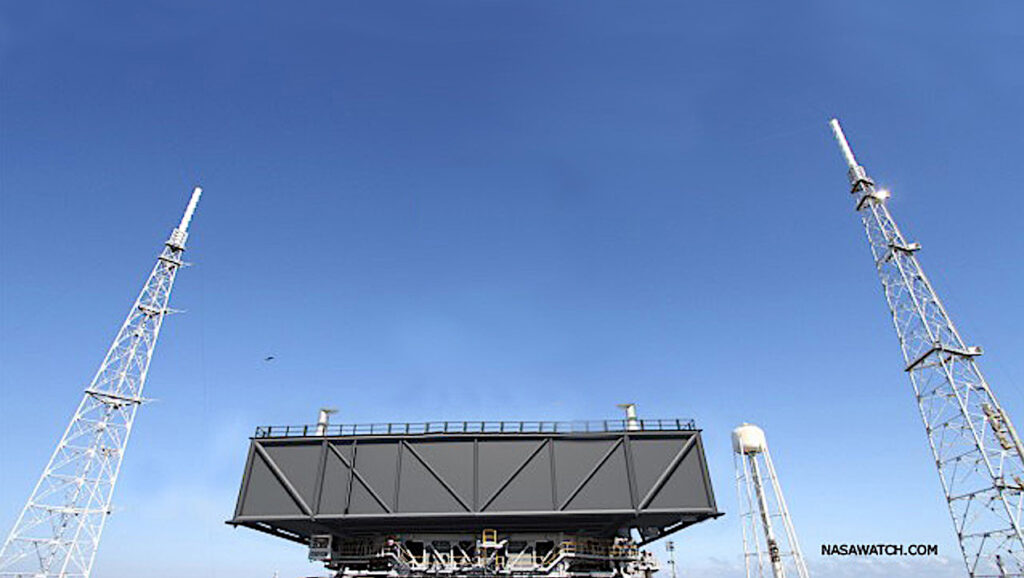

Reconfiguring the MLP is the driver for the 33 month gap between EM-1 and EM-2. It’s also why ASAP has recommended a second MLP – to close that gap.

This disturbs me.

There are folks on this forum, like myself, for whom space is a hobby…who work careers and rub elbows that are far from aerospace and get all of their information from publicly available sources and from folks like you here in the forum and elsewhere who are closer to what goes on.

That the SLS program is so messed up that nobody here can keep track of it is a kick in the teeth. I know enough to know that constantly changing plans add cost and complexity to anything. Added complexity adds new gremlins that have to be hunted down every launch. Added cost takes more money away from robotic Planetary Science missions and other projects that are being pillaged to pay for this thing…programs that advance the science envelope.

The two together sink the future of the program, and the degree of eventual loss when it eventually fails, further into the abyss.

I’m going to make a wild guess and say they don’t have anyone who has successfully led the development of flight and ground support software for a launch vehicle. The last vehicle NASA could have done this for was the Shuttle and I doubt if they have anyone left from that team (I don’t count the ARES I test flight as a success). Ferrite core computer programming anyone?

If the program continues, they should contract this out to some entity that has successfully done this kind of work in the past few decades, like ULA has with Atlas V and Delta IV or SpaceX with Falcon 9 and Falcon Heavy. The question then becomes whether any company would want this headache.

To be fair, writing flight software is a bit different from most sorts of software development. Running faster most of the time, for example, isn’t a virtue if it means running long a tenth of a percent of the time. (And, if you are old enough to remember the joke, “I am Pentium of the Borg. Division is futile. You will be approximated,” that’s also fine for most commercial applications but totally off the table for flight software.)
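The point about running long a tenth of a percent of the time can be shown with a toy comparison. The timings and the 10 ms budget below are made-up numbers, and `meets_deadline` is a hypothetical helper, not anything from real flight code. It is only a sketch of why worst-case time, not average time, is what counts against a hard deadline.

```python
from statistics import mean
from typing import Sequence


def meets_deadline(run_times_ms: Sequence[float], deadline_ms: float) -> bool:
    """True only if every observed run finished within the deadline."""
    return max(run_times_ms) <= deadline_ms


# Hypothetical timings against an assumed 10 ms control-loop budget.
DEADLINE_MS = 10.0
steady = [8.0] * 1000                   # always 8 ms
fast_but_spiky = [5.0] * 999 + [14.0]   # usually 5 ms, overruns once in 1,000

for name, samples in (("steady", steady), ("fast_but_spiky", fast_but_spiky)):
    print(name, f"mean={mean(samples):.3f} ms", meets_deadline(samples, DEADLINE_MS))
```

The second routine wins on average speed and would look better in most commercial benchmarks, yet it is the one that misses the deadline. Note that observed timings can only show a failure; real flight software would need an analyzed worst-case bound, not just a sample.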

Anyway, I’m confident ULA would do almost anything NASA cared to pay them to do. But you’re getting to the core of the whole SLS program. It’s a NASA program, not a NASA-supervised effort with all the actual work contracted out. So, naturally, they are doing the flight software development in house. We could debate whether or not that’s a good idea, but the same debate would apply to all the other aspects of SLS which NASA is doing in house.

I can’t see how writing flight software is any different from any other form of software. I’ve QA’d software in finance, utilities, government, food, retail, air traffic control & many others I’ve forgotten over the years, & they have all followed the same SDLC & STLC. How is writing flight software any different ? …. Please explain, I’m curious to know

Much as I often defend the need for uniquely big vehicles like SLS, given the wealth of software expertise available in the private sector – including on commercial airlines – why the hell is the MSFC Software team doing anything other than writing requirements and validating what the commercial provider delivered?

Interesting you should mention that. What are Requirements ? Often with clients (Pres. Kennedy), “Fly to Moon” is a very simple requirement, anyone can understand this; but it took 1/3 of a million people a decade to deliver this requirement. Often, requirements themselves are the problem because they are written by business people, not QA people. No definition of what constitutes a requirement is ever specified on a project & this is where the problem begins. Some definition such as “a single sentence statement which cannot or should not be further decomposed” should be used from the beginning. Personally, my advice is to use Test Conditions as the preferred form of Requirements, but trying to get someone with little or no understanding of Software QA to understand this is extremely difficult.

Even with Requirement-Traceability-Matrices (mapping tests to Requirements), how does one know if undiscovered defects exist? You can fix the defects you find, but how do you gain confidence about the overall solution & what you don’t know? Imagine if drug companies released products with an unknown expected failure rate; we would all be too scared to take medication. However, many, many, many companies release software of unknown Defect-Free Confidence; NASA it seems, is no different.

I would expect that they do not even know the number of required tests because this is not a parameter that forms part of any bid submissions, but it is actually possible to determine using the http://www.testimation.com technology. From this, they can determine the level of Defect-Free Confidence delivered from the number of tests actually executed.

So, to directly answer your question, absolutely NASA should be involved with more than just the requirements & validating that they have been commercially delivered. They should ALSO know the level of Defect-Free Confidence that IS TO BE DELIVERED, & the actual level delivered at the end of the day by the vendor. All of this can be specified well in advance of any contracts being signed if they engaged http://www.testimation.com

Quite frankly, I’m starting to think that they don’t know about this technology; assuming that the story we’re all commenting on is factually correct. Perhaps Mr. Cowing should approach NASA about whether or not they use the Testimation technology ? …. If they do, then all of my words are silly. If they are not using it, then maybe they need to engage the company.

Many don’t know that Ares I-X used a version of flight software/avionics from Atlas V. NASA wanted LM to give them the Atlas V code because they knew the software team and related elements @ MSFC could not do the job. LM said no. So NASA pushed forward with people who had never written one line of flight software giving it the old civil servant try. That team is still in place today. If LM had said yes and given up a $600,000,000 dollar investment to NASA (for free) they would have used it on SLS. NASA MSFC should not be writing one line of executable code. The job should go to industry.

Haven’t we flown a big rocket before? It’s not like F no longer equals M x A. My goodness, aren’t there enough algorithms out there? PEG anyone?

We’ve flown and lost enough rockets to know it’s more than just F=m a. We even know how it’s more than that (atmospheric torques, bending of the stack, oscillatory modes, irregularities in engine performance, etc.) Writing control software for all of that, and testing the code, isn’t easy and has to be done for any new launch vehicle. This is all about whether or not the SLS program is doing it in a good way.

The key problem is attacking, blackballing and suppressing anyone who finds a problem with the flight s/w. That’s nuts! NASA Safety Mgt. suppressing problems. That alone is a reason to close shop. How does NASA make whole the people they damaged, the careers they destroyed, and the impacts to families all for doing the job right? How does MSFC show its workforce that doing the right thing is accepted again?

How does MSFC win back the trust of the taxpayer?

SLS has an unofficial name – Longshot

I know nothing of the inner workings/politics at MSFC, but it appears that some folks at NASA think MSFC’s Safety and Mission Assurance Directorate is doing a good job. In July 2017, George Mitchell and 5 others (including contractors) in that directorate received the NASA Silver Achievement Medal. There were also a number of other awards to folks in that directorate including the Distinguished Service Medal, Exceptional Service Medal, Exceptional Engineering Achievement Medal, etc.:

https://www.nasa.gov/sites/…

Having worked at NASA as both a contractor and a civil servant let me tell you that awards are regularly given for reasons other than excellence.

Yes, as is the case in any area of human endeavor where awards or recognition are given. I imagine it’s also the case that sources might paint a picture that deviates from objectivity. Usually the truth lies somewhere in between…

As fcrary posted, I have little doubt that the required process is onerous and some steps are skipped. One just hopes that the process actually followed is based on experience and expertise, and not expedience.